Most organizations using AI have no structured way to evaluate which model to trust. With multiple systems producing different answers to the same prompt, enterprises need a repeatable evaluation framework — a trust layer — that measures consistency, predictability, and factual alignment across models before deploying them in production. Without one, high-stakes decisions rest on unverified AI outputs.

Most organizations using AI have no structured way to evaluate which model to trust. With multiple AI systems producing different answers to the same question, enterprises need a repeatable evaluation framework, a trust layer, that measures consistency, predictability, and factual alignment across models before deploying them in production. Without one, organizations are making high-stakes decisions based on unverified AI outputs.

Teams today have access to more AI models than ever: ChatGPT, Claude, open source alternatives, embedded copilots across enterprise tools. Ask the same question across two or three of them and you’ll often get different answers.

So which one do you trust?

The data suggests most people haven’t figured that out. A 2025 Pew Research Center study surveying over 5,000 US adults and 1,000 AI experts found that only a quarter of the public believes AI will benefit them personally, while nearly 60 percent say they have little or no control over whether AI is used in their lives. Majorities in both groups say they don’t trust the government or private companies to regulate it responsibly.

For most organizations, there’s no clear answer. More importantly, there’s no clear process.

Why does AI confidence create risk?

AI confidence creates risk because fluency mimics accuracy. Large language models produce outputs that are grammatically polished, well-structured, and assertive, regardless of whether the underlying information is correct. This makes errors harder to detect than in systems that signal uncertainty, and it means organizations can act on wrong answers without realizing it.

Fluent doesn’t mean accurate. Confidence doesn’t mean correctness. Today AI is more likely to be overconfident rather than hallucinate outright.

At small scale, humans can catch obvious errors. At enterprise scale, where AI cost compounds quickly, they can’t. The real issue isn’t bad answers. It’s believing AI outputs without verification.

What is the missing layer in enterprise AI?

The missing layer in enterprise AI is structured evaluation: the ability to systematically test, compare, and score AI outputs before relying on them for decisions. Most organizations have adopted AI tools and prompting practices but have not built the capability to evaluate whether the answers they receive are reliable.

Most organizations have:

- Tools

- Use cases

- Prompting practices

What they don’t have:

- Structured evaluation: systematic methods to assess output quality

- Repeatable testing: controlled conditions for comparing results

- Cross-model comparison: running the same inputs through multiple models to detect divergence

We’ve optimized for generating answers. We haven’t built the capability to evaluate them.

What does AI trust actually require?

AI trust requires three measurable properties: consistency of outputs across similar scenarios, predictability of behavior when inputs vary, and alignment with verifiable facts. Organizations that evaluate models on these criteria, rather than brand reputation or benchmark scores, can make evidence-based decisions about which AI to deploy.

Trust isn’t about:

- Brand reputation

- Model size

- Benchmark scores

Trust is about:

- Consistency: does the model give similar answers to similar questions?

- Predictability: does behavior change when inputs change slightly?

- Factual alignment: can the output be verified against known facts?

Trust isn’t a feature of the model. It’s a capability your organization needs to build.

How should organizations evaluate AI models?

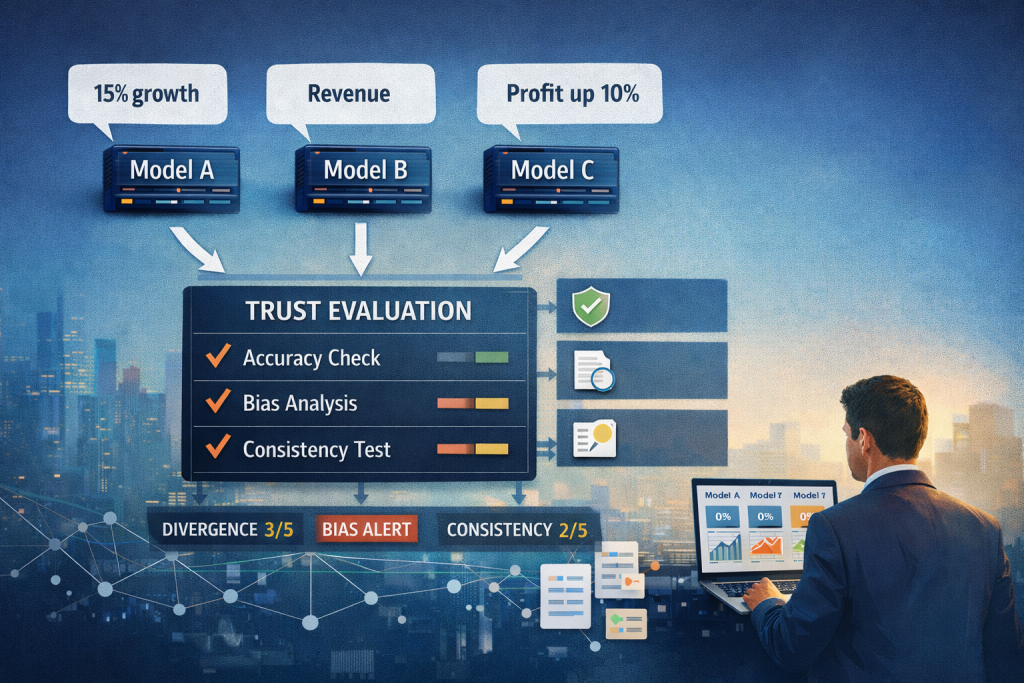

Organizations should evaluate AI models by running identical prompts across multiple models using structured, repeatable inputs, then comparing outputs for divergence and applying a scoring framework to assess quality. This process, sometimes called a trust layer or AI evaluation framework, replaces ad hoc testing with systematic, evidence-based model selection.

At a minimum, an AI evaluation process should include:

- Run the same prompt across multiple models

- Use structured, repeatable inputs with controlled variables

- Compare outputs for divergence, factual accuracy, and completeness

- Introduce lightweight scoring or referee judgment to rank results

Here’s how traditional AI selection compares to structured evaluation:

| Traditional AI Selection | Structured AI Evaluation |

|---|---|

| Pick a model based on brand or benchmarks | Test multiple models against your actual use cases |

| Evaluate based on a few manual tests | Run repeatable, controlled evaluations at scale |

| Trust the output because it sounds right | Score outputs for consistency, accuracy, and divergence |

| Single model, single answer | Multi-model comparison with referee judgment |

| No record of why a model was chosen | Documented evaluation trail for governance |

The goal isn’t to find the “best” answer. It’s to understand where models disagree, drift, or fail.

What is a trust layer for AI?

A trust layer is an evaluation framework that sits between AI model outputs and organizational decisions. It captures, compares, and scores responses from multiple models in parallel, giving teams visibility into where models agree, where they diverge, and which outputs are most reliable for a given use case. This is the gap most organizations haven’t addressed yet.

I’ve been building ModelTrust to tackle it directly:

- Run prompts across multiple models in parallel

- Capture structured, comparable outputs

- Analyze consistency, sentiment, and divergence

- Apply a referee layer to judge responses efficiently

Three principles guide the approach:

- Repeatability: same inputs, controlled runs

- Comparability: normalized outputs across different models

- Efficiency: evaluate at scale, not manually

Because if you can’t evaluate models systematically, you can’t deploy them with confidence.

If your AI strategy includes deploying models across teams or business functions, a trust layer isn’t optional. It’s foundational. Reach out if you’d like to get early access. Accepting beta applications now.