Canada committed roughly $890M to AI supercomputing infrastructure, but builders still hit fragmented vendors, opaque pricing, and an unresolved trade-off: the most usable compute in Canada is the least sovereign, and the most sovereign is the least usable. Presence is not sovereignty. Closing that gap will take more than infrastructure spending. It needs transparent pricing, real access models, and a common lens for comparing options that don’t share a billing unit.

I didn’t set out to map Canada’s AI compute landscape. I set out to build on it.

While working on Zeever.ca, including a Toronto.ca prototype testing sovereign AI against municipal data, I needed to make a series of unglamorous infrastructure decisions. Vector RAG or GraphRAG. Canadian-hosted inference or global providers. Local consumer GPUs or cloud compute. Together.ai, Fireworks.ai, or one of the names you only hear about in procurement decks.

What I learned in a few weeks of building is something most Canadian executives don’t yet have to confront directly: affordable, scalable, Canadian-hosted AI compute is genuinely hard to access. You can build something. You can make it work. But doing it cost-effectively, at scale, and inside Canadian jurisdiction is a different problem entirely.

A National Priority With a Visibility Problem

This isn’t just a builder’s complaint anymore. The federal Spring budget committed roughly $890M toward AI supercomputing infrastructure, which signals that Ottawa now treats sovereign compute as a national capability question rather than an industrial policy footnote.

That investment matters. But it raises a question that nobody seems able to answer cleanly: what does Canada’s AI compute capacity actually look like today? Where are the GPUs, who owns them, who can access them, and what do they cost? I went looking for that answer and found there isn’t one. Not in any usable form.

Why I Built the Landscape

Canada has a paradox that’s been written about for years. We are talent-rich and increasingly compute-constrained. The signals of investment are real, including sovereign compute strategy, public and private data centre expansion, and a growing menu of AI adoption programs. From a builder’s seat, none of that translates into something you can plan against. Vendor information is fragmented, pricing is opaque, sovereignty boundaries are unclear, and access pathways are inconsistent across providers.

So I built what I needed and couldn’t find. The result is at zeever.ca/canadas-ai-compute-landscape.

The Real Problem: You Can’t Compare What You Can’t See

I assumed there would be a clean dataset somewhere. Vendor, GPU types, regions, pricing, access models. Standard stuff. There isn’t. What exists instead is a patchwork of fragmented vendor disclosures, opaque enterprise pricing (especially from the telcos), mixed billing models that switch between tokens, GPU hours, and contract envelopes, and a lot of missing data that everyone politely pretends isn’t missing.

Even the basic question, what does this actually cost, is hard to answer for most providers in this country.

Building a Methodology That Works

To make any of this comparable, I needed to normalize systems that weren’t designed to be compared. Three principles shaped the approach.

The first was normalizing across billing models. Cohere prices in tokens. CoreWeave in GPU hours. The telcos price in contracts that don’t leave the room. So I introduced an AI Compute Index, a directional way to compare cost efficiency across pricing models that aren’t structurally compatible. It isn’t perfect. It’s a lens, not a verdict. But it makes a conversation possible.

The second was separating access from capability. Having GPUs in Canada does not mean you can use them. So the landscape tracks infrastructure presence separately from real-world access models, whether that’s API, cloud, enterprise contract, or private deployment. This turned out to be the most important distinction in the whole exercise.

The third was treating sovereignty as a first-class variable, not a marketing asterisk. Data residency, jurisdiction, and ownership all matter, and they don’t always move together. The landscape explicitly tracks Canadian hosting, ownership and control, and the trade-offs that come with each.
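One way to picture the second and third principles is a record that stores infrastructure presence, access model, and the three sovereignty dimensions as separate fields, so none of them can stand in for another. The sketch below is illustrative only: the field names, the `ProviderRecord` class, the choice of which access models count as self-serve, and both example providers are hypothetical and are not taken from the Zeever dataset.

```python
from dataclasses import dataclass
from enum import Enum


class AccessModel(Enum):
    # The four access models named in the text.
    API = "api"
    CLOUD = "cloud"
    ENTERPRISE_CONTRACT = "enterprise contract"
    PRIVATE_DEPLOYMENT = "private deployment"


# Assumption of this sketch: API and cloud access can be evaluated
# without an enterprise sales cycle; the other two cannot.
SELF_SERVE = frozenset({AccessModel.API, AccessModel.CLOUD})


@dataclass(frozen=True)
class ProviderRecord:
    name: str
    gpus_in_canada: bool        # infrastructure presence
    access_models: frozenset    # how a builder can actually reach the compute
    canadian_hosted: bool       # data residency
    canadian_owned: bool        # ownership
    canadian_controlled: bool   # jurisdiction and operational control

    def is_self_serve(self) -> bool:
        # True if at least one access model is self-serve.
        return bool(self.access_models & SELF_SERVE)

    def is_fully_sovereign(self) -> bool:
        # Hosting, ownership, and control must all be Canadian.
        return (
            self.canadian_hosted
            and self.canadian_owned
            and self.canadian_controlled
        )


# Two made-up records, not real vendors.
global_api = ProviderRecord(
    name="Hypothetical Global API",
    gpus_in_canada=True,
    access_models=frozenset({AccessModel.API}),
    canadian_hosted=True,
    canadian_owned=False,
    canadian_controlled=False,
)
sovereign_factory = ProviderRecord(
    name="Hypothetical Sovereign Factory",
    gpus_in_canada=True,
    access_models=frozenset({AccessModel.ENTERPRISE_CONTRACT}),
    canadian_hosted=True,
    canadian_owned=True,
    canadian_controlled=True,
)

for provider in (global_api, sovereign_factory):
    print(provider.name, provider.is_self_serve(), provider.is_fully_sovereign())
```

Both records have GPUs in Canada, yet they differ on every other axis, which is the reason for keeping presence, access, and sovereignty in separate columns.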

What the Data Actually Says

Two findings stood out, and both should sharpen how Canadian executives think about their AI roadmap.

The first is that Canada has compute, but it doesn’t have control. The infrastructure footprint is real. Much of it is foreign-owned or tied to non-Canadian platforms. Truly Canadian-controlled AI compute, the kind a CTO could point to and say we own this stack end to end, is limited. Presence is not sovereignty.

The second is that access is the real constraint, not supply. The most usable platforms are API-driven, globally distributed, and largely non-sovereign. The most sovereign options are less accessible, more enterprise-oriented, or not productized in any meaningful way. The trade-off is uncomfortable and worth saying plainly: in Canada today, the most usable compute is the least sovereign, and the most sovereign compute is the least usable. That is the gap the $890M needs to close, and infrastructure spending alone won’t do it.

Why Vendor Comparison Is So Hard

The hardest part of this project wasn’t gathering data. It was making it comparable. Pricing opacity is the first wall. Bell and Telus publish little usable pricing, enterprise contracts distort any comparison you try to make, and some vendors publish nothing at all. Some data points have to stay marked unknown if the landscape is going to remain honest.

The second wall is unit mismatch. Tokens against GPU hours. Throughput against latency. Managed APIs against raw infrastructure. There is no universal unit, and pretending there is would make the landscape less useful, not more.

The third is that this is a moving target. New GPU deployments, new Canadian regions, and constant pricing changes mean any snapshot ages quickly. This is version one of an ongoing exercise, not a finished product.

What I’d Tell Another CTO

Four things came out of this work that I’d want any technology leader weighing a Canadian AI strategy to hear directly.

The compute gap in this country is about access, not supply. Canada doesn’t just need more GPUs. It needs better access models, transparent pricing, and developer usability that doesn’t require an enterprise sales cycle to evaluate.

The sovereignty versus usability trade-off is the defining tension in Canadian AI right now, and it’s unresolved at the policy level, the vendor level, and the architecture level. Anyone telling you otherwise is selling something.

Transparency is going to be a competitive advantage for whichever Canadian provider decides to lead with it. Right now it’s hard to compare vendors, hard to estimate cost, and hard to plan architecture, and that friction slows everything down.

And we need better ways to normalize compute comparisons, because without a common lens, decisions default to familiarity or marketing. The AI Compute Index is an early attempt at that lens. I’d like to see better ones.

Final Thought

Canada is investing heavily in AI infrastructure, and that’s necessary. It isn’t sufficient. We also need accessible compute, transparent pricing, and a way to compare options that doesn’t require building a spreadsheet from scratch every time a CTO asks a reasonable question. Until that exists, even understanding the landscape will be harder than it should be.

That’s why this map matters. Not because it’s complete, but because the absence of one is itself a finding.

Frequently Asked Questions

How many AI compute providers does Canada actually have?

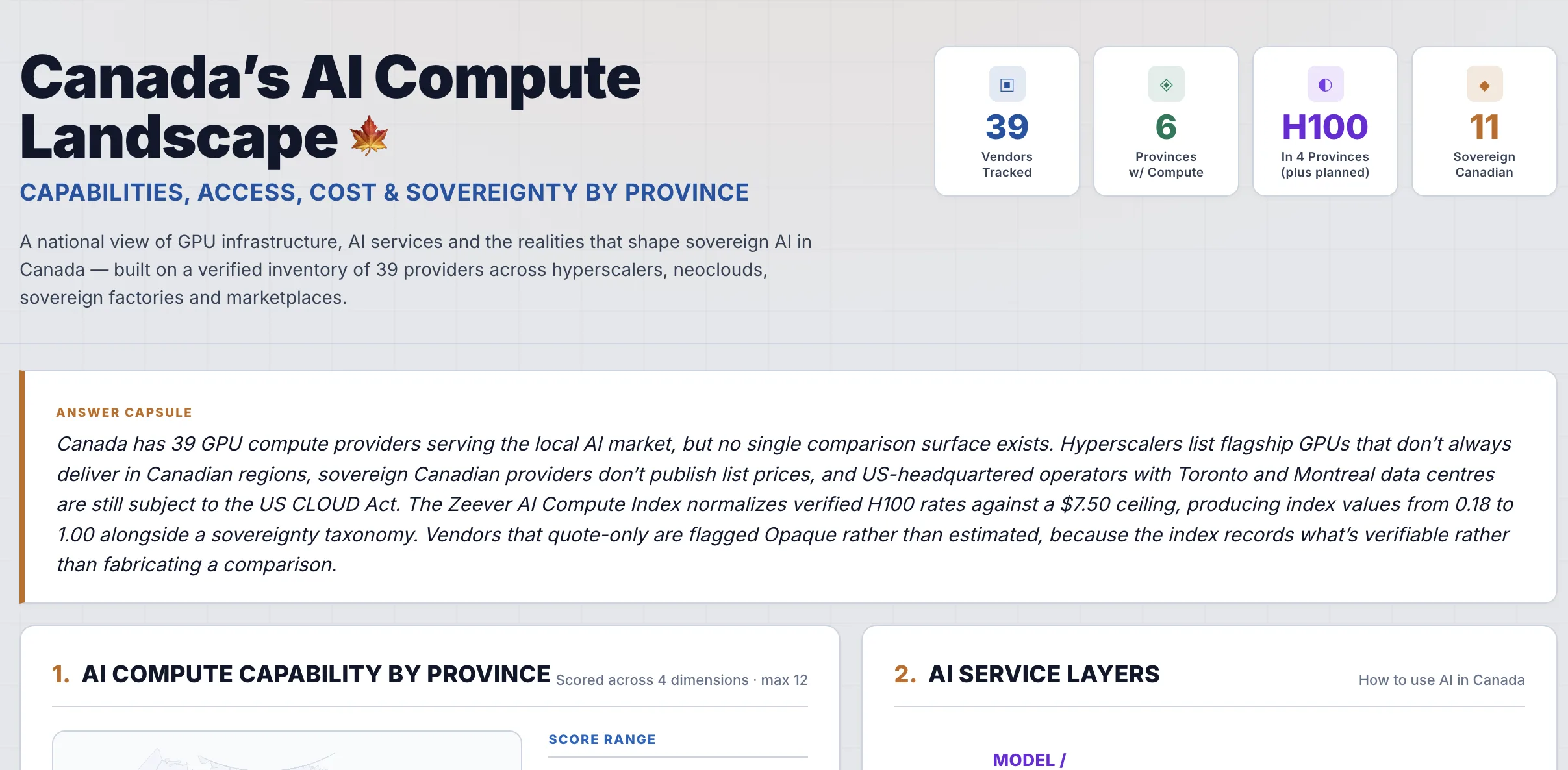

The Zeever landscape tracks 39 verified providers serving the Canadian AI market, spread across hyperscalers, neoclouds, sovereign factories, and marketplaces. Eleven of those are sovereign Canadian providers, with H100 GPU availability concentrated in four provinces.

What is the AI Compute Index?

The AI Compute Index is a directional way to compare cost efficiency across providers that price in incompatible units. Verified H100 rates are normalized against a $7.50 ceiling to produce index values from 0.18 to 1.00. Vendors that only quote on request are flagged Opaque rather than estimated, because the index records what’s verifiable rather than fabricating a comparison.
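As a rough illustration of that normalization, the sketch below divides an hourly H100 rate by the $7.50 ceiling and leaves unpublished rates unscored. The exact formula is not spelled out here, so the simple ratio, the per-GPU-hour unit, the cap at 1.00, and the function names are all assumptions made for the example. Under that reading, an index of 0.18 would correspond to a rate of $1.35.

```python
from typing import Optional

# Ceiling stated in the text; treating it as USD per GPU-hour is an assumption.
CEILING_USD = 7.50


def compute_index(h100_rate: Optional[float],
                  ceiling: float = CEILING_USD) -> Optional[float]:
    """Return rate / ceiling rounded to two decimals, or None if unpublished.

    Assumed formula for illustration; not confirmed as the Zeever method.
    """
    if h100_rate is None:
        return None  # quote-on-request vendors are not estimated
    if h100_rate <= 0:
        raise ValueError("rate must be positive")
    return round(min(h100_rate / ceiling, 1.0), 2)


def index_label(h100_rate: Optional[float]) -> str:
    """Format the index for display, flagging unpublished rates as Opaque."""
    value = compute_index(h100_rate)
    return "Opaque" if value is None else f"{value:.2f}"


# Hypothetical rates, chosen only to exercise the function.
for rate in (1.35, 3.00, 7.50, None):
    print(rate, index_label(rate))
```

Returning `None` instead of a guessed number keeps the missing data visible, which matches the decision to mark vendors Opaque rather than estimate them.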

Is having Canadian data centres the same as sovereign AI?

No. A US-headquartered operator running Toronto or Montreal data centres is still subject to the US CLOUD Act, regardless of where the racks physically sit. Sovereignty depends on jurisdiction, ownership, and control, not just data residency.

What’s the biggest constraint on Canadian AI right now?

Access, not supply. The most usable platforms are API-driven and largely non-sovereign. The most sovereign Canadian options tend to be enterprise-only, lightly productized, or unavailable without a sales cycle. Closing that gap requires better access models and pricing transparency, not just more GPU capacity.

How much has Canada committed to AI supercomputing infrastructure?

The federal Spring budget committed roughly $890M toward AI supercomputing infrastructure, signalling that sovereign compute is now treated as a national capability question rather than an industrial policy footnote.